This wider variety of possible inputs and outputs makes it a harder task for AI. Because of these challenges, AI experts lack consensus on how quickly, and even whether, computers will completely replace human transcribers.

A voice assistant like Alexa only needs to determine which, if any, of a predetermined list of vocal commands is being uttered, whereas a transcription program needs to listen for, and capture, any utterance at all. Most people who use Siri or Alexa would agree that, while those tools do an admirable job of understanding a user most of the time, most of us wouldn’t trust them with our lives. Even when an AI system learns to have high accuracy in the best case - for instance, a single clear-voiced speaker in a quiet room - maintaining that accuracy for multiple voices, multiple languages, heavy accents, background noises, crowded rooms, and more becomes very complicated. And while voice recognition for vocal commands and voice recognition for transcription seem like similar problems, the latter is actually much more challenging. Accuracy is especially important when transcriptions affect the accuracy of quotes in news stories, the outcomes of expensive and important trials, or even the lives of patients. While it might seem like a straightforward project for AIs, since it can be seen as simply converting one kind of data (sound) into another (text), in fact, various factors make voice transcription a significant computing problem. Although it’s hard to find statistics for transcription specifically, Grand View Research projects that the global voice recognition market overall will hit $127.58 billion by 2024. According to the Bureau of Labor Statistics, there were 57,400 medical scribes and 19,600 court reporters (which includes closed captioners for television and other media) in the United States in 2016. In addition, voice-to-text transcription has long been an important business on its own merits in the medical, legal, and media fields, to name a few, and has traditionally been done by teams of human transcribers who charge rates of $3 or $4 per minute. This makes reliable voice transcription an important goal for artificial intelligence.
Instead, it’s in the form of spoken words on video and audio recordings or even live events. But much of the data in the world isn’t in text form. The return on investment is there, and I encourage CIOs and other health care executives to consider building these technologies into their strategic road maps. Artificial intelligence - especially machine learning - is at its best when it is working with a large, analyzable data set, like text. AI holds a lot of promise in leaning out a number of processes and reducing the burden on already overtaxed physicians, especially during Covid-19. Based on my nearly two decades of experience consulting with more than 20 U.S. health systems, using AI to transform the clinical documentation portion of care delivery can create more seamless experiences for both patients and physicians on the continuum of care and should be explored as part of the overall digital health strategy. This could mean an increase by up to a third of patient revenue per day, which amounts to a significant financial stream for provider organizations. Through the use of innovative technologies like voice recognition and NLP, physicians can recoup up to three hours per day for direct patient care and in the service of healing.

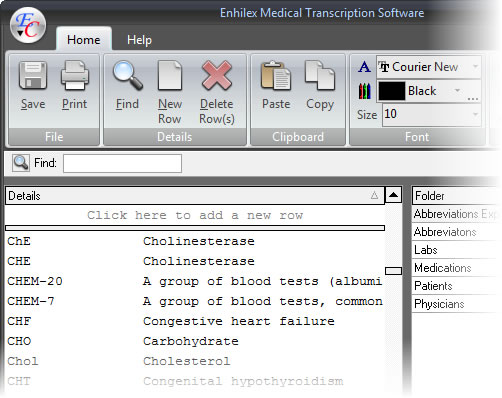

The transcription market size is approximately $20 billion (led by its use in health care) and is bolstered by this transition from traditional to AI-powered solutions, with voice recognition playing a significant role in this forecast. In addition, physicians can also use this technology from within Microsoft Teams videoconference calls to document telehealth visits. This documentation of encounters in real time negates the need for manual data entry or human transcription. Nuance's latest acquisition, Saykara, uses an AI voice assistant via a mobile application on the physician’s cell phone to transform salient conversational content between physicians and patients into clinical notes, prescription orders and referrals, and then populate both structured and narrative data directly into EHRs. For example, there are types of software that use neural networks to map patient-physician conversations into a note in the EHR (an AI-based speech-to-text system) but require wall-mounted devices with microphones to record each interaction. There are a significant number of market entrants in this space.
